Patent application of University of Applied Sciences Aschaffenburg for the abstraction of sensor data

KI Data Tooling has filed its first patent application. Hannes Reichert (University of Applied Sciences Aschaffenburg, UAB) and Konrad Doll (UAB) report on the motivation, design considerations, and their general vision of sensor data abstraction methods in automotive perception systems.

Let's start with the motivation: What is sensor data abstraction, and why is it necessary?

Collecting and preparing data for AI functions requires considerable time and engineering effort. Sensor setups are often left unchanged after a recording vehicle is first equipped, so the recorded data covers little variability in the sensors used. However, this variability is essential to keep AI functions from becoming biased toward the sensor(s) used. Currently, switching a sensor after the AI functions have been designed is not trivial.

This is where sensor data abstraction comes in. Sensor data abstraction is a work package in KI Data Tooling aiming to reduce this sensor bias.

What is your approach?

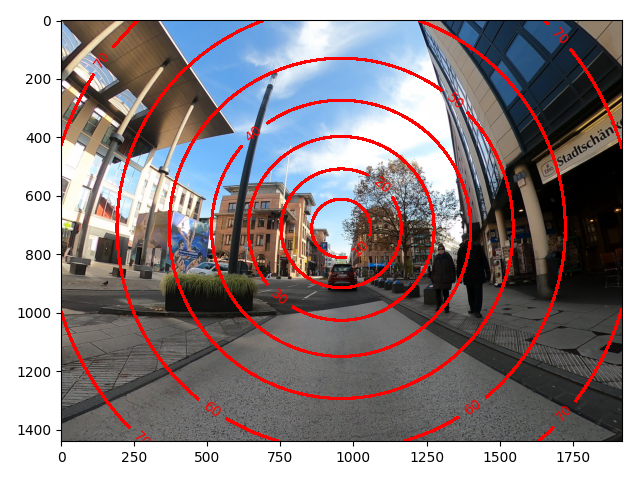

A projection (i.e., an image) yields ambiguities in the size and shape of objects, introduced by the sensors used. This spans an enormous space of ways in which objects such as pedestrians can be represented in sensor data. While we rely on the generalization properties of AI functions for appearance (e.g., clothing), we decided to encode the projection properties alongside the sensor data and use them as context.

In a nutshell, we determine a deflection metric for every pixel. It encodes the angle at which a beam of light hits the sensor, relative to the sensor's principal axis. We can treat this deflection metric as an additional image channel and process it together with the sensor data using convolutional neural networks. Furthermore, this limits the possibility space, leading to faster convergence and better generalization to novel sensors with different characteristics.
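
To make the idea of a per-pixel deflection channel more concrete, here is a minimal sketch. It assumes an ideal pinhole camera without lens distortion; the function names and the intrinsic parameters `fx`, `fy`, `cx`, `cy` are illustrative assumptions and are not taken from the patent application, whose exact metric may differ.

```python
import math

def deflection_map(width, height, fx, fy, cx, cy):
    """Angle in radians between each pixel's viewing ray and the principal axis.

    Assumption: ideal pinhole model with focal lengths (fx, fy) and
    principal point (cx, cy), all given in pixels.
    """
    rows = []
    for v in range(height):
        row = []
        for u in range(width):
            # Normalised image coordinates of the ray through pixel (u, v).
            x = (u - cx) / fx
            y = (v - cy) / fy
            # The ray direction is (x, y, 1); its angle to the axis (0, 0, 1).
            row.append(math.atan(math.hypot(x, y)))
        rows.append(row)
    return rows

def add_deflection_channel(image, deflection):
    """Append the deflection angle as an extra channel to every pixel.

    `image` is an H x W nested list of per-pixel channel lists, e.g. [r, g, b].
    """
    return [
        [list(pixel) + [angle] for pixel, angle in zip(image_row, angle_row)]
        for image_row, angle_row in zip(image, deflection)
    ]
```

With this representation, two cameras with different focal lengths produce different deflection channels for the same pixel grid, which is the kind of context a convolutional network could use to tell their projections apart.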

And finally: What are the fields of application?

Initially, we developed the method for camera data, and we are currently working on a publication about it. However, it turned out that the method is also well suited for LiDAR data. Using the depth measurements of the LiDAR together with the deflection metric allows us to treat LiDAR like a camera and enables processing with state-of-the-art image processing methods while retaining 3D information. This brings LiDAR data processing closer to the state of the art for camera data.
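
One way to picture treating LiDAR like a camera is to project the point cloud into an image-like grid whose pixels carry the measured range and the deflection angle. The following sketch is an illustration under stated assumptions, not the method from the patent application: it assumes the x axis is the principal axis (y left, z up), uses a simple spherical projection, and keeps the nearest return per pixel. The function name and all parameters are hypothetical.

```python
import math

def lidar_to_range_image(points, width, height, h_fov, v_fov):
    """Project (x, y, z) points into an H x W grid of [range, deflection] pixels.

    Assumptions: x is the principal axis, y points left, z points up; the
    fields of view (radians) are centred on the principal axis. Pixels
    without a return stay [0.0, 0.0].
    """
    image = [[[0.0, 0.0] for _ in range(width)] for _ in range(height)]
    for x, y, z in points:
        r = math.sqrt(x * x + y * y + z * z)
        if r == 0.0:
            continue
        azimuth = math.atan2(y, x)
        elevation = math.asin(z / r)
        # Map the angles to [0, 1) across the field of view.
        u = 0.5 - azimuth / h_fov
        v = 0.5 - elevation / v_fov
        if not (0.0 <= u < 1.0 and 0.0 <= v < 1.0):
            continue  # point lies outside the field of view
        col, row = int(u * width), int(v * height)
        pixel = image[row][col]
        if pixel[0] == 0.0 or r < pixel[0]:
            # Deflection: angle between the ray and the principal (x) axis.
            deflection = math.acos(max(-1.0, min(1.0, x / r)))
            image[row][col] = [r, deflection]
    return image
```

Because the range channel is kept next to the deflection channel, the 3D position of each return can still be recovered from the grid, while the grid itself can be fed to standard 2D convolutional layers.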

Image: TH Aschaffenburg